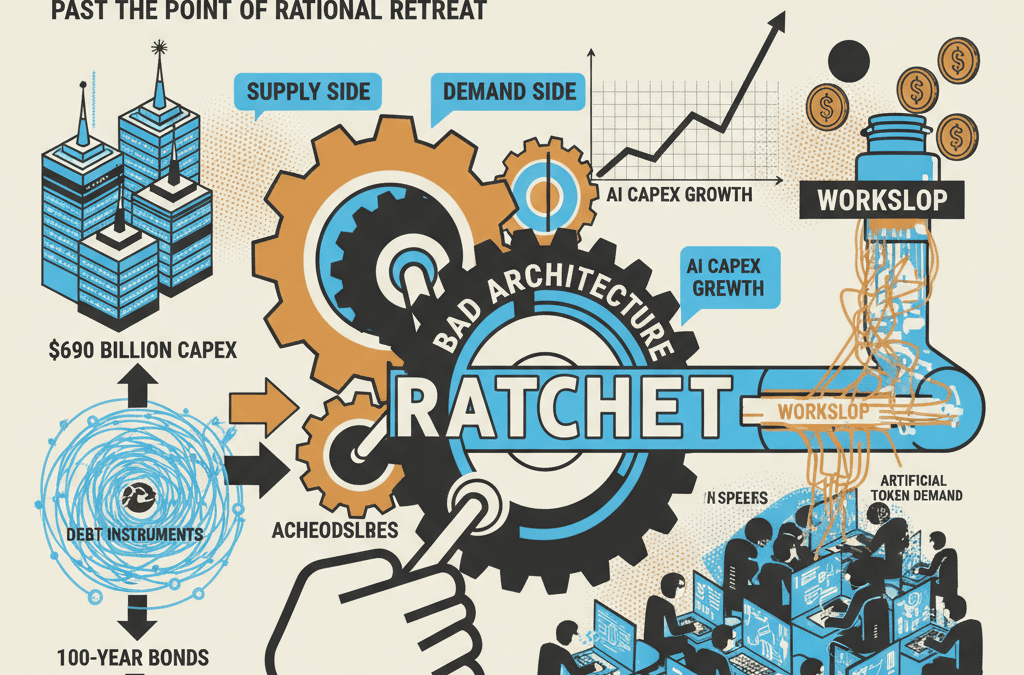

Executive Summary

The prevailing debate frames AI infrastructure spending as either visionary investment or speculative bubble. Both framings miss the structural reality. What we are witnessing is a ratchet: a mechanism that only tightens and cannot reverse. On the supply side, combined hyperscaler and related infrastructure capex is on track to reach roughly $690 billion in 2026, consuming 90-100% of hyperscalers’ operating cash flow, and the debt instruments financing it, including Alphabet’s 100-year sterling bond, make retreat more expensive than continuation. On the demand side, enterprises are consuming that compute at scale through agentic AI deployments, but the overwhelming majority are producing what researchers call “workslop”: low-quality output that generates artificial token demand indistinguishable from productive use on hyperscaler dashboards.

The convergence is the insight: bad enterprise architecture sustains the capex ratchet by creating demand that looks like product-market fit but functions as architectural waste. The technology works — AI-native firms prove it daily. The customers, overwhelmingly, do not. The ratchet tightens from both ends.

The Capex Ratchet: Past the Point of Rational Retreat

The numbers have moved past the range where traditional investment analysis applies. Combined hyperscaler capital expenditure (Amazon, Alphabet, Meta, Microsoft, and Oracle) is projected to reach approximately $602 billion in 2026, a 36% increase over 2025’s already-historic $443 billion. Broader estimates that include secondary infrastructure players push the total toward $690 billion. Roughly 75% of the aggregate spend targets AI infrastructure: GPUs, custom silicon, data centers, and the power systems to run them.

The cash flow picture tells the real story. Bank of America estimates hyperscalers will spend roughly 90% of their operating cash flow on capex in 2026, up from 65% in 2025 and against a 10-year average of 40%. UBS puts the current figure closer to 100%. Individual projections are stark: Pivotal Research projects Alphabet’s free cash flow to plummet from $73.3 billion to $8.2 billion. Morgan Stanley and Bank of America see Amazon turning FCF-negative, with deficits ranging from $17 billion to $28 billion. Oracle’s most recent quarter showed negative $13.2 billion in free cash flow against positive $9.5 billion a year prior.

To fund the gap between what they earn and what they spend, hyperscalers have turned to debt markets at a scale that redefines the sector. Morgan Stanley projects aggregate hyperscaler borrowing of $400 billion in 2026, more than double the $165 billion borrowed in 2025. Oracle launched a $25 billion bond offering in February to support a $45-50 billion annual financing plan. Alphabet raised $32 billion in a multi-currency debt sale completed in under 24 hours. Goldman Sachs projects cumulative hyperscaler capex from 2025-2027 will reach $1.15 trillion, more than double the $477 billion spent in the prior three-year window. If the figures are comparable, that projection already looks conservative: the 2025 and 2026 totals above sum to roughly $1.05 trillion on their own.

And then there is the century bond.

On February 9, 2026, Alphabet priced a £1 billion sterling bond maturing in February 2126, a 100-year instrument at 6.125%. The offering attracted £9.5 billion in orders, nearly 10x oversubscription. The primary buyers were UK pension funds and insurance companies seeking to match long-duration liabilities. Only three entities had previously issued sterling century bonds: the University of Oxford, the Wellcome Trust, and EDF, a French regulated utility. These are institutions with centuries of continuity or government-backed revenue guarantees. Alphabet is not that kind of entity. Its core Google business dates only to 1998, and it operates in markets where competitive position shifts on 18-month GPU cycles.

Michael Burry was direct: “Alphabet looking to issue a 100-year bond. Last time this happened in tech was Motorola in 1997, which was the last year Motorola was considered a big deal. At the start of 1997, Motorola was a top 25 market cap and top 25 revenue corporation in America. Never again.” The parallel is uncomfortably precise. Motorola’s century bond coincided with its absolute peak. Within two years, Nokia had surpassed it in mobile phones. Within three, its Iridium satellite venture — a decade-long, multi-billion-dollar bet on infrastructure — filed for bankruptcy after nine months of operation. Motorola recovered approximately 1% of its investment. By the time the iPhone launched in 2007, Motorola’s market share had collapsed from 60% to under 5%.

The century bond is not merely a financing instrument. It is a structural commitment to perpetual growth. Alphabet’s $185 billion in projected 2026 capex represents roughly 50% of revenue. The GPU refresh cycle runs 12-18 months — NVIDIA’s Blackwell chips deliver 4x the power efficiency of the Hopper generation they replace, rendering prior silicon non-competitive for frontier workloads within two years. Goldman Sachs identified a $40 billion annual depreciation charge for data centers commissioned in 2025, against just $15-20 billion in revenue at current utilization rates. The infrastructure depreciates faster than it generates the revenue to fund its own replacement.
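The depreciation arithmetic is easy to check. The sketch below is a minimal illustration, not any analyst's model. It computes how much of the cited $40 billion annual charge the $15-20 billion of revenue covers. It then shows how the assumed useful life alone moves the annual charge. The $120 billion build and the 6- and 3-year lives are hypothetical inputs, and straight-line depreciation is an assumed method.

```python
def straight_line_annual(capex_bn: float, useful_life_years: float) -> float:
    """Annual depreciation charge under (assumed) straight-line accounting."""
    return capex_bn / useful_life_years


def depreciation_coverage(annual_depreciation_bn: float,
                          annual_revenue_bn: float) -> tuple[float, float]:
    """Return (share of depreciation covered by revenue, annual shortfall in $bn)."""
    coverage = annual_revenue_bn / annual_depreciation_bn
    shortfall = annual_depreciation_bn - annual_revenue_bn
    return coverage, shortfall


# Figures cited in the text: $40bn annual depreciation vs $15-20bn revenue.
for revenue in (15.0, 20.0):
    coverage, shortfall = depreciation_coverage(40.0, revenue)
    print(f"revenue ${revenue:.0f}bn covers {coverage:.1%} of depreciation, "
          f"shortfall ${shortfall:.0f}bn")

# Hypothetical $120bn build: halving the useful life doubles the annual charge.
for life in (6, 3):
    print(f"{life}-year life: ${straight_line_annual(120.0, life):.0f}bn per year")
```

On these inputs, revenue covers between 37.5% and 50% of the charge, which is the gap the paragraph describes.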

But stopping is not an option. Bank of America strategist Michael Hartnett has identified a capex reduction announcement as the primary catalyst for a major market rotation, projecting 10-20% stock declines for any hyperscaler that signals a pullback. Amazon’s stock has already fallen 12% on capex concerns in 2026; Microsoft is down 16%. No major hyperscaler has successfully reduced capex mid-cycle without losing cloud market share. The ratchet does not allow reversal.

The Workslop Ceiling: Enterprise Adoption Without Architecture

The demand that sustains the capex ratchet is real in volume. It is questionable in value. Deloitte’s 2025 Emerging Technology Trends study found that only 11% of organizations have agentic AI in production, while 42% are still developing strategy and 35% have no formal strategy at all. Gartner predicts that over 40% of agentic AI projects will be cancelled by the end of 2027 due to escalating costs, unclear business value, and integration failures with legacy systems. MIT’s NANDA Initiative found that 95% of enterprise AI pilots deliver zero measurable ROI. A separate, widely cited estimate finds that for every 33 proofs of concept launched, only 4 reach production.

The failure mode is not that AI does not work. It is that enterprises are deploying it without the architectural prerequisites to make it work. McKinsey’s 2025 State of AI report found that 65% of organizations now use generative AI, but only 39% report any EBIT impact — and most of those report less than 5% of their EBIT is attributable to AI. Only 11% of companies worldwide use AI at scale. The critical finding: 50% of high-performing organizations redesign workflows from scratch for AI, rather than layering AI onto existing human-designed processes. The organizations that fail — the overwhelming majority — treat AI as a tool to overlay on unreformed systems.

This is where workslop enters the picture. The term was coined by Stanford’s Social Media Lab and BetterUp Labs in a 2025 Harvard Business Review study. It describes AI-generated work content that masquerades as quality output but lacks the substance to advance actual tasks. Their research found that 40% of workers had received workslop in the prior month. Respondents estimated that roughly 15% of the content they receive at work qualifies as workslop. Each instance takes an average of one hour and 56 minutes to identify and remediate. The cost is quantifiable: $186 per month per affected employee. With 40% of the workforce affected, that scales to roughly $9 million annually for a 10,000-person organization.
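The $9 million figure follows from three inputs in the study: headcount, the share of workers affected, and the monthly cost per affected worker. Below is a minimal sketch, assuming (as the estimate above does) that the 40% rate and the $186 monthly cost apply uniformly across the organization. `annual_workslop_cost` is a hypothetical helper name.

```python
def annual_workslop_cost(headcount: int, share_affected: float,
                         monthly_cost: float) -> float:
    """Annual cost: affected employees x monthly cost per affected employee x 12."""
    affected_employees = headcount * share_affected
    return affected_employees * monthly_cost * 12


# Inputs cited in the text: 10,000 staff, 40% affected, $186 per month each.
cost = annual_workslop_cost(10_000, 0.40, 186.0)
print(f"${cost:,.0f} per year")  # $8,928,000 per year
```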

The compute economics multiply the problem. Agentic AI workflows (sub-agents calling sub-agents, verification loops, retry chains, multi-step reasoning) consume 10 to 100 times the tokens of a simple prompt-response interaction. Research benchmarks show that reflexion loops, where an agent iterates on its own output, produce a 50x token multiplier over 10 cycles. Multi-agent architectures show a 77x increase in input tokens compared to single-agent approaches. A single software engineering task using an unconstrained agentic workflow costs $5-8 in compute. The output of those expensive workflows is often workslop: reports requiring full human rewrite, code introducing more bugs than it fixes, customer service interactions that escalate more tickets than they resolve. In those cases, the enterprise has consumed enormous compute to produce negative value. But from the hyperscaler’s dashboard, those tokens look identical to productive ones. Utilization is up. Revenue per customer is growing. The ratchet tightens.
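One plausible mechanism behind multipliers like the 50x reflexion figure is that each cycle re-reads the entire growing transcript. The toy model below assumes every cycle adds one fixed-size chunk (a draft or critique) and re-processes all prior chunks. Under that assumption, total tokens grow quadratically with the number of cycles. The 2,000-token chunk size and the `reflexion_tokens` helper are hypothetical. Real agents vary in prompt size and truncation, so this sketches the shape of the curve rather than reproducing the benchmark.

```python
def reflexion_tokens(chunk_tokens: int, cycles: int) -> int:
    """Total tokens processed when each cycle re-reads the whole transcript.

    Toy assumption: cycle k processes k chunks (the original exchange plus
    k - 1 earlier drafts or critiques), so the total equals
    chunk_tokens * cycles * (cycles + 1) / 2.
    """
    return sum(k * chunk_tokens for k in range(1, cycles + 1))


CHUNK = 2_000  # hypothetical tokens per draft or critique
baseline = reflexion_tokens(CHUNK, 1)
for n in (1, 3, 10):
    total = reflexion_tokens(CHUNK, n)
    print(f"{n:>2} cycles: {total:>7,} tokens ({total // baseline}x)")
```

Under these assumptions, ten cycles cost 55 times a single pass, in the same range as the 50x benchmark cited above. Summarizing or truncating earlier drafts instead of re-sending them would flatten the curve.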

The Counter-Model: What the Ratchet Was Built For

The technology works when the architecture is right. This is not a hypothetical — it is observable, measurable, and accelerating.

Start with the numbers that matter most: revenue per employee. Analysis of AI-native firms — companies that built their products, workflows, and organizational structures for AI from inception — shows average revenue per employee of $3.48 million. The comparable figure for established SaaS companies — Salesforce, Adobe, ServiceNow — is approximately $611,000. That is a 5.7x efficiency gap, and it is widening. Dario Amodei, CEO of Anthropic, predicted with 70-80% confidence that the first billion-dollar company with a single human employee will emerge by 2026. Sam Altman has disclosed a betting pool among tech CEOs tracking the same milestone. Y Combinator partner Jared Friedman reported that 25% of the Winter 2025 batch have codebases that are approximately 95% AI-generated — a figure that carries the caveat of founder self-reporting rather than audited measurement, but which nonetheless signals the direction of architectural intent.

The difference between these firms and the legacy enterprises burning tokens on workslop is not tool adoption. It is architecture.

Consider Cursor, the AI-first IDE that reached $100 million in annual recurring revenue within its first year, among the fastest SaaS growth trajectories ever recorded. Cursor was not built by bolting AI onto an existing code editor. It was architected from the ground up with whole-codebase indexing: the AI has access to the entire project structure, not just the open file. This architectural choice, expensive in development but simple in concept, enables multi-file refactoring that bolt-on competitors like GitHub Copilot structurally cannot match. When a developer asks Cursor to rename a function, it propagates the change across every file that references it. When a developer asks Copilot, it autocompletes within the current context window. The underlying technology is the same; the architecture makes them entirely different tools.
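To make the architectural difference concrete, here is a minimal sketch of what a whole-codebase index buys. It builds an identifier-to-files map once, so a rename can be applied to every file that mentions the symbol, not only the file in view. This is not Cursor's implementation, which the text does not describe. `build_index` and `rename_everywhere` are hypothetical names. The regex-based matching is a deliberate simplification that ignores scoping, strings, and comments; real tools handle those with parsers or language servers.

```python
import re
from collections import defaultdict

# Simplification: a regex identifier matcher stands in for a real parser.
IDENTIFIER = re.compile(r"[A-Za-z_][A-Za-z0-9_]*")


def build_index(files: dict[str, str]) -> dict[str, set[str]]:
    """Map every identifier to the set of file paths that mention it."""
    index: dict[str, set[str]] = defaultdict(set)
    for path, source in files.items():
        for name in IDENTIFIER.findall(source):
            index[name].add(path)
    return index


def rename_everywhere(files: dict[str, str], index: dict[str, set[str]],
                      old: str, new: str) -> list[str]:
    """Rename `old` to `new` in every indexed file and keep the index current."""
    pattern = re.compile(rf"\b{re.escape(old)}\b")  # whole-word matches only
    touched = sorted(index.get(old, set()))
    for path in touched:
        files[path] = pattern.sub(new, files[path])
    # The old name no longer appears anywhere; those files now mention `new`.
    index[new] = index.get(new, set()) | set(touched)
    index.pop(old, None)
    return touched


files = {
    "billing.py": "def calc_total(items):\n    return sum(items)\n",
    "api.py": "from billing import calc_total\nprint(calc_total([1, 2]))\n",
    "notes.txt": "calc_totals is a different name.\n",
}
index = build_index(files)
touched = rename_everywhere(files, index, "calc_total", "compute_total")
print(touched)  # ['api.py', 'billing.py']
```

The index is the expensive part to build and keep fresh. Once it exists, the rename itself is a lookup plus a loop.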

The same pattern appears in healthcare. AI-native startups Abridge and Ambience have captured roughly 70% of net new revenue in the medical AI scribe market. Abridge reached $100 million in ARR with approximately 30% market share; Ambience hit $30 million ARR at a valuation exceeding $1 billion. They did this despite Microsoft’s Nuance being deployed in 77% of U.S. hospitals. The incumbents have distribution. The AI-native firms have architecture — systems designed from day one to process clinical conversation into structured medical records, rather than retrofitting transcription features onto legacy electronic health record platforms.

At the enterprise scale, the architectural advantage is already producing measurable results even inside large organizations willing to do the prerequisite work. Amazon’s internal deployment of its Q tool for Java upgrades reduced migration timelines from 50 developer-days to hours per application. A five-person team upgraded 1,000 production Java applications in two days — with 79% of AI-generated transformations implemented without changes. CEO Andy Jassy reported the effort freed the equivalent of 4,500 developer-years of work and saved $260 million. But critically, this was possible because Amazon had documented its Java codebases extensively enough for AI to reason about the migration logic. The documentation was the prerequisite. The AI was the accelerant.

Stripe offers another window into what AI-native workflows look like inside a company that invested in the architectural foundation. Engineers at Stripe now merge over 1,000 AI-generated pull requests per week — code generated entirely by AI agents, reviewed by humans, but written by no human hand. The key: Stripe’s codebase was already structured for this. Modular, well-documented, explicitly designed so that AI could reason about component boundaries and system behavior. They did not achieve this by purchasing better AI tools. They achieved it by building the architecture that makes AI tools productive.

These firms share architectural principles that their legacy counterparts overwhelmingly lack. Documented codebases where AI can reason about why decisions were made, not just what code exists. Modular system design where components have clean boundaries and explicit interfaces. Outcome-driven workflows organized around user problems rather than org chart territories. Continuous deployment cycles measured in hours rather than quarters. The contrast with legacy enterprise is not incremental — it is categorical. Legacy organizations were designed for human coordination: approval chains, information brokers, departmental boundaries. When you bolt AI agents onto that structure, the agents inherit the dysfunction. They cannot reason about systems nobody documented. They cannot optimize workflows nobody mapped. They generate workslop because the architecture gives them no foundation for generating anything else.
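Explicit interfaces and recorded rationale can be illustrated in a few lines. The sketch below uses Python's `typing.Protocol` to declare a component boundary. Its docstring records why the boundary exists, which is the kind of context an agent needs to reason about a change. `PaymentGateway`, `FakeGateway`, and `checkout` are hypothetical names chosen for illustration, not code from Stripe or any firm named above.

```python
from typing import Protocol, runtime_checkable


@runtime_checkable
class PaymentGateway(Protocol):
    """Boundary between checkout logic and whichever payment provider is active.

    Why (hypothetical design note): checkout must not depend on provider
    details, so provider-specific rules stay behind this interface.
    """

    def charge(self, amount_cents: int, currency: str) -> str:
        """Charge the customer and return a provider transaction id."""
        ...


class FakeGateway:
    """In-memory stand-in that satisfies PaymentGateway structurally."""

    def charge(self, amount_cents: int, currency: str) -> str:
        if amount_cents <= 0:
            raise ValueError("amount must be positive")
        return f"txn-{currency.lower()}-{amount_cents}"


def checkout(gateway: PaymentGateway, amount_cents: int) -> str:
    """Depends only on the declared interface, never on a concrete provider."""
    return gateway.charge(amount_cents, "USD")


gateway = FakeGateway()
print(isinstance(gateway, PaymentGateway))  # True
print(checkout(gateway, 1299))  # txn-usd-1299
```

`checkout` sees only the interface and its recorded rationale. So a human or an agent can swap the provider behind it without reading the rest of the system.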

This is the crux: the AI-native firms are the existence proof that the infrastructure investment is justified. Cursor’s growth, Stripe’s throughput, Amazon’s Q migration — these validate the thesis that AI creates genuine productivity gains when the architecture supports it. But these firms represent a small fraction of total compute consumption. The ratchet was built for them. It is sustained by everyone else.

The Convergence: How Bad Architecture Sustains the Ratchet

The technology works. That fact is precisely what makes the ratchet so dangerous.

If AI were vaporware — if the models could not code, could not analyze, could not reason — the infrastructure spend would collapse under its own weight. But the technology demonstrably works for firms with the right architecture. Cursor’s growth curve proves it. Stripe’s weekly AI pull request volume proves it. Amazon’s Java migration proves it. This creates a problem that pure skepticism cannot capture: the demand is real and artificial simultaneously, depending on who is generating it.

Here is where the historical parallel becomes useful, not as analogy but as structural precedent. The late-1990s telecom fiber buildout invested over $500 billion in infrastructure, including 80 million miles of fiber optic cable. The underlying technology worked: fiber optic transmission was and remains the backbone of modern telecommunications. The demand for bandwidth was real and would eventually materialize far beyond what even optimists projected. But during the build cycle, approximately 85% of that fiber remained dark, unused. The build was sustained not by productive demand but by the narrative of demand: Internet traffic doubles every three months, bandwidth will always be the bottleneck, whoever builds the most pipe wins. The unwinding took six years, destroyed $2 trillion in market value, and produced record corporate losses. JDS Uniphase alone lost $56.1 billion in fiscal 2001.

The AI ratchet replicates this structure with one critical intensifier: unlike dark fiber, the AI infrastructure is not sitting idle. It is being used. Enterprise agentic deployments are consuming tokens at exponentially growing rates. But the question the telecom bust should have taught us to ask is not “is the infrastructure being used?” It is “is the use producing proportional value?” Dark fiber was obviously wasteful. Workslop is not obvious at all — it registers as utilization, generates revenue, and fills dashboards with activity metrics that look indistinguishable from productive work.

When hyperscalers tell Wall Street that their markets are “supply-constrained, not demand-constrained,” the evidence they cite is physical: electricity availability, data center construction timelines, transformer delivery schedules. Amazon CEO Andy Jassy stated that “as fast as we’re adding capacity, we’re monetizing it.” This conflates capacity filling with value creation. A data center running at 85% GPU utilization is not necessarily a data center producing 85% of its theoretical value. Suppose half the tokens processed are generating workslop (content that will be discarded, code that will be reverted, analyses that will be redone by humans). Then utilization is a misleading metric. But it is the metric Wall Street rewards. And critically, Wall Street has not developed systematic quality-adjusted utilization metrics. Analyst commentary focuses on capacity additions and occupancy percentages, not business value per token. AI-related services are expected to deliver only about $25 billion in revenue in 2025. That is roughly 4% of what hyperscalers plan to spend in 2026 on the infrastructure to deliver them.
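The text notes that analyst commentary lacks a quality-adjusted utilization metric. Such a metric could be as simple as discounting raw utilization by the share of tokens whose output is discarded. The sketch below is a hypothetical metric, not an established industry measure. It assumes value scales linearly with non-wasted tokens and reproduces the scenario above: 85% utilization with half the tokens spent on workslop.

```python
def quality_adjusted_utilization(gpu_utilization: float,
                                 wasted_token_share: float) -> float:
    """Raw utilization discounted by the share of tokens whose output is discarded.

    Hypothetical metric: assumes value scales linearly with non-wasted tokens.
    """
    for value in (gpu_utilization, wasted_token_share):
        if not 0.0 <= value <= 1.0:
            raise ValueError("inputs must be fractions between 0 and 1")
    return gpu_utilization * (1.0 - wasted_token_share)


# Scenario from the text: 85% utilization, half the tokens are workslop.
print(quality_adjusted_utilization(0.85, 0.5))  # 0.425
```

The dashboard would still report 85%. The adjusted figure is the one the paragraph argues investors are not seeing.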

The feedback loop is self-reinforcing. Bad enterprise architecture generates many times more tokens per unit of useful output. Those tokens consume compute. The compute registers as utilization. The utilization justifies the capex. The capex builds more compute. More compute enables more poorly architected deployments by enterprises that still have not documented their codebases, still have not defined the problems they are solving, still have not redesigned workflows for AI. Each turn of the ratchet locks tighter. In the telecom bust, dark fiber was at least visibly idle. The AI ratchet is invisible, because the waste and the value flow through the same pipes. They show up as the same metric on the same quarterly earnings call.

The Century Bond as Epitaph

A company financing 18-36 month depreciating assets with 100-year debt is not making an investment decision. It is making a permanence claim — asserting that AI infrastructure is a utility, as durable as the University of Oxford or a regulated French electricity provider. The pension funds buying the century bond at 9.5x oversubscription are pricing in the same assumption: that Alphabet will not just exist in 2126 but will generate sufficient cash flow to service this debt for a century.

The base rate is not encouraging. Approximately 0.5% of companies survive 100 years. The average S&P 500 tenure has collapsed from 61 years in 1958 to 18 years today. Technology companies face even steeper odds — the sector’s 10-year survival rate is approximately 29%. Alphabet is betting that it belongs in the 0.5% club while operating in the most competitively volatile sector of the economy, financing assets that become obsolete on 18-month cycles, and consuming nearly 100% of its operating cash flow to stay in the race.

The century bond crystallizes the ratchet. Once the debt is issued, the growth must continue — not because the market demands it, but because the debt requires it. Alphabet must generate sufficient cash flow for 100 years to service instruments it issued to build infrastructure that depreciates in two. The ratchet does not care whether the customers using that infrastructure are producing value or workslop. It only requires that they keep consuming tokens.

The greater fool in this structure is not the last investor or the last buyer of compute. It is the organization that deployed AI at scale without first defining the problem it was solving — without documenting why its systems work the way they do, without architecting for the technology it was adopting, without asking whether its org chart was a solution to a coordination problem or a monument to legacy politics. These organizations are the demand that sustains the ratchet. When they stop — through budget cuts, project cancellations, or the slow realization that performance theater is not a business strategy — the ratchet will have nothing left to turn against.

The technology works. The architecture, for most, does not. And the $690 billion bet is that nobody will notice the difference until the debt is issued, the tokens are burned, and the ratchet has already turned past the point of no return.
