Research compiled from NBER, McKinsey, EY, KPMG, BCG, OpenAI, CRA, Bloomberg, BLS, Lawfare, and Carnegie Endowment primary sources

How the Capability Gap Makes the Ratchet Invisible

On February 22, 2026, a fictional recession crashed real markets. Citrini Research's "The 2028 Global Intelligence Crisis" — a speculative scenario modeling AI-driven economic collapse — contributed to an estimated $300 billion sell-off, an 800-point Dow decline, and IBM's worst single-day drop in 25 years. [Measured] The dominant response from analysts, economists, and commentators converged on a single reassurance: AI adoption is slow, the economy has time, and the gap between AI capability and economic integration creates opportunity rather than danger.

The gap is real. It is measurable. And it is the most dangerous feature of the current transition — not because it delays the damage, but because it makes the damage invisible until it becomes irreversible.

This essay names the mechanism: the Dissipation Veil. The capability-dissipation gap, the measurable lag between what AI can do and what the economy has productively integrated, is not a protective buffer that buys time for institutional adaptation. It is the perceptual mechanism by which the Ratchet operates without triggering political resistance. The gap ensures that displacement presents as structural drift (budget reallocation, hiring freezes, pipeline exclusion) rather than as acute crisis. That places AI displacement in exactly the category of labor market disruption that the political system has historically left unaddressed for decades.

The Dissipation Veil is not a new mechanism. It is the name for the relationship between existing mechanisms — the Ratchet, the Workslop Ceiling, Structural Exclusion, the Wage Signal Collapse, the Adversarial Equilibrium Trap, and the Aggregate Demand Crisis — that explains why the transition proceeds without triggering resistance. The reason nobody sees the Ratchet turning is that the dissipation gap makes it look like normal business friction.

Confidence calibration: 60–70% that the Dissipation Veil is the primary mechanism preventing political activation on AI labor displacement, rather than ideological opposition or institutional incapacity alone. The China Shock precedent — 17 years from displacement onset to major policy action — raises confidence. The SAG-AFTRA strike precedent, where organized labor successfully mobilized around AI-specific threats, lowers it. The binding uncertainty is whether the structural presentation of AI displacement will eventually trigger a reclassification event, as the China Shock ultimately did, or whether the diffuse and individually explicable nature of the displacement is categorically different from prior structural disruptions.

Part I: The Event That Tested Whether Fiction Could Break Reality

On February 22, 2026, James van Geelen of Citrini Research and Alap Shah published "The 2028 Global Intelligence Crisis" — a speculative scenario written from the fictional vantage point of June 2028, describing how AI-driven labor displacement cascades from sector-specific disruption through private credit contagion to systemic financial crisis. [Measured] The piece was framed explicitly as a thought exercise, not a prediction. Van Geelen later told Bloomberg he was "shocked" by the market reaction.

The reaction was substantial. The piece accumulated approximately 16 million views on X. [Estimated] Michael Burry — the investor immortalized in The Big Short — posted "And you think I'm bearish" alongside a direct link to the research, calling it "brilliant and heartfelt." [Measured] The Wall Street Journal covered it through a live-market card. Bloomberg, CNBC, Reuters, Barron's, and Fortune all carried the story. Jim Cramer dismissed it as "a dystopian fairy tale." [Measured]

The market response was measurable: the Dow fell over 800 points on Monday, February 23, closing at the day's low with only 27% of stocks gaining ground. DoorDash, American Express, KKR, and Blackstone each dropped more than 8%. [Measured] IBM's 13.2% decline, its worst single-day performance since 2000 and one that erased $31 billion in market value, was primarily driven by Anthropic's concurrent announcement that Claude Code could automate COBOL modernization, directly threatening IBM's legacy services business. [Measured] The Citrini piece amplified the broader AI-disruption sentiment, but IBM's collapse had its own independent catalyst. The IGV ETF (iShares Expanded Tech-Software Sector) had already hit a 52-week low of $79.65 earlier that month, down 27.1% year-to-date, with top holdings averaging 40% off all-time highs. [Measured] The Citrini piece did not create the conditions for a software sell-off. It narrated them.

What followed was more revealing than the crash itself: the reassurance narrative.

Michael Bloch — partner at Quiet Capital, formerly one of the first 50 employees at DoorDash — published "The 2028 Global Intelligence Boom" within 48 hours, mirroring Citrini's format with optimistic conclusions. His core argument: AI agents would return $8,000–$12,000 per household per year in services spending currently devoted to navigating complexity. Pain in SaaS and middleman businesses was being confused with broader economic collapse. He projected the S&P crossing 12,000 and the Nasdaq above 40,000. [Measured]

Alex Imas, a professor at the University of Chicago Booth School of Business, had published a Substack analysis — "Can advanced AI lead to negative economic growth?" — on his newsletter Ghosts of Electricity. Using an island-economy parable with 100 workers and 10 capital owners, he modeled how full automation could theoretically collapse demand to satiated owner consumption. His conclusion: "Probably not." The conditions needed for growth to actually turn negative were "likely too unrealistic to hold in practice." [Measured]

Citadel Securities' Frank Flight argued that Indeed software engineering postings were rising 11% year-over-year and cited S-curve adoption patterns. Noah Smith called the piece "just a scary bedtime story." Claudia Sahm — the economist whose eponymous recession indicator has become a standard forecasting tool — offered the sharpest observation: "Gradual, limited job losses will be the hard one to get policymakers to focus and act." [Measured]

Sahm was identifying the Dissipation Veil without naming it. And the broader reassurance narrative was performing exactly the function this essay describes: the gap between AI capability and economic integration was being cited not as a structural feature that prevents detection but as evidence that the system is safe.

The most analytically sophisticated version of this reassurance came from a widely circulated YouTube analysis by Nate B. Jones — former Head of Product at Amazon Prime Video, now a prominent AI strategy commentator with approximately 127,000 subscribers. Jones introduced the "capability-dissipation gap" framework: two curves, one exponential (AI capability), one flatter (societal integration), with the gap between them as the site of opportunity. His prescription — exploit the gap, build AI fluency — treats the gap as a stable window during which individuals and institutions can adapt. [Measured]

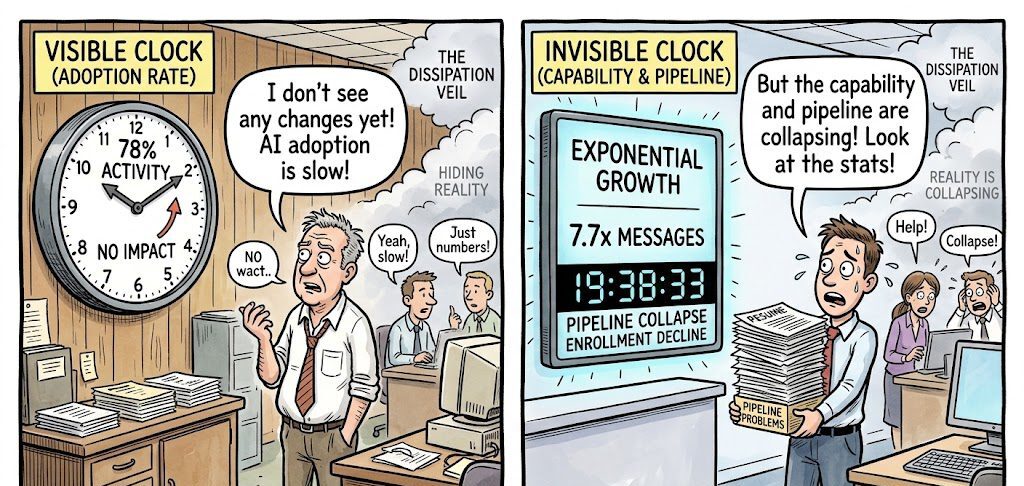

The framework is observationally correct. The interpretation is where this essay diverges. Jones sees two curves and concludes the slower one protects us. The Theory of Recursive Displacement sees two clocks — one visible, one invisible — and concludes the slow clock is hiding the fast one.

Part II: The Measurement Illusion

The reassurance narrative depends on a specific reading of the adoption data: AI is being adopted slowly, most deployments fail, therefore the disruption is far away. The data cited is real. The interpretation rests on a measurement failure identical to the one documented in the Ratchet.

Start with the headline statistics as they are typically presented. McKinsey's 2025 Global Survey reports that 78% of organizations "use AI." McKinsey's separate Superagency report, published in January 2025 and based on a survey of 238 C-level executives scored against a five-stage maturity model, classified only 1% of organizations as "Mature," meaning AI was fundamentally changing how work was done and driving substantial business outcomes. [Measured] These are two different surveys with different samples and methodologies, and the distinction matters: the 78% figure leaves "adoption" deliberately undefined, encompassing everything from a single employee experimenting with ChatGPT to enterprise-wide integration, while the 1% figure uses a specific operational threshold. Citing them as a pair implies that a single survey found a 77-point gap between adoption and maturity. In reality, two different instruments measuring different things at different levels of specificity both point in the same direction, toward a chasm between activity and value, but the precision of the implied contrast is overstated.

The NBER working paper that landed in February 2026 provides the most rigorous cross-national data available. "Firm Data on AI" (Working Paper No. 34836, by Yotzov, Barrero, Bloom, Bunn, Davis, Foster, Jalca, Meyer, Mizen, Navarrete, Smietanka, Thwaites, and Wang) draws from stratified firm samples across the United States, United Kingdom, Germany, and Australia — approximately 6,000 CFOs and CEOs. [Measured] The headline findings: roughly 69% of firms report active AI use (78% in the U.S.), but over 80% report zero impact on either employment or productivity over the past three years. More precisely: approximately 90% report no employment impact, and approximately 89% report no measurable productivity change. The forward-looking forecasts are modestly optimistic — firms expect a 1.4% productivity boost and 0.8% output increase over the next three years — but these are expectations, not measurements, and expectations in the AI space have systematically exceeded realization.

The pattern replicates across every major survey. EY's 2025 Work Reimagined Survey (15,000 employees and 1,500 employers across 29 countries) found that 88% of employees use AI at work to some degree — but only 37% use it daily and only 5% qualify as advanced users who blend multiple tools to unlock meaningful productivity gains. [Measured] Most usage is basic: search (54%), summarizing documents (38%). Only 28% of organizations have positioned employees to achieve transformative business impact. KPMG's AI Quarterly Pulse Survey, tracking approximately 130 U.S. C-suite leaders per quarter from organizations with over $1 billion in annual revenue, provides perhaps the clearest demonstration of definitional inflation: agentic AI deployment reported at 11% in Q1 2025, surging to 42% by Q3, then falling to 26% in Q4 — not because deployments were pulled back, but because leaders adopted more sophisticated definitions of what constitutes a true agent. [Measured] BCG's October 2024 survey of 1,000 CxOs across 59 countries found 4% of companies generating substantial value from AI and 74% showing no tangible returns. [Measured] OpenAI's own data — drawn from over 1 million business customers and approximately 7 million paid workplace seats — reveals that frontier workers at the 95th percentile send 17 times more coding messages than the median employee, and frontier firms generate approximately 7 times more messages to Custom GPTs than the median enterprise. [Measured]

These are not data points on an adoption curve. They are a workslop distribution.

The Ratchet essay documented how the Workslop Ceiling operates at the enterprise level: wasteful tokens are indistinguishable from productive ones on hyperscaler dashboards. The same mechanism operates one level up. Enterprise AI surveys report adoption as a binary: the organization either "uses AI" or it does not. But the gap between using AI and productively integrating AI is precisely where the measurement fails. McKinsey explicitly acknowledges leaving "adopted" undefined. No major enterprise survey — McKinsey, Deloitte, Gartner, KPMG, or BCG — systematically distinguishes between tool purchase, pilot deployment, workflow integration, and measurable productivity impact as separate adoption stages with population distributions. [Estimated] The closest approximation is McKinsey's Superagency maturity model (Nascent 8%, Emerging 39%, Developing 31%, Expanding 22%, Mature 1%) and BCG's four-tier classification (Emerging through Future-Built). But these are reported as aggregates, not as explicit funnels.
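To make the difference between an aggregate and a funnel concrete, the sketch below rereads the Superagency stage shares quoted above as a cumulative funnel. This is an illustrative reinterpretation, not something McKinsey publishes. It assumes the five stages are strictly ordered and that an organization at a later stage has passed through the earlier ones. The function name `funnel` is hypothetical.

```python
# Stage shares as quoted in the text for McKinsey's Superagency maturity model.
# They sum to 101 because the published percentages are rounded.
STAGES = [
    ("Nascent", 8),
    ("Emerging", 39),
    ("Developing", 31),
    ("Expanding", 22),
    ("Mature", 1),
]


def funnel(stages):
    """Return (stage, percent of organizations at or beyond that stage).

    Assumption: stages are ordered, so the share "at or beyond" a stage is
    the sum of that stage and every later one, normalized by the total.
    """
    total = sum(share for _, share in stages)
    remaining = total
    rows = []
    for name, share in stages:
        rows.append((name, round(100 * remaining / total, 1)))
        remaining -= share
    return rows


for name, pct in funnel(STAGES):
    print(f"{name:<11} {pct:5.1f}% at or beyond this stage")
```

Read this way, the drop from roughly a quarter of organizations at "Expanding" or beyond to about 1% at "Mature" is the step an adoption headline cannot show.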

MIT's NANDA initiative reported that 95% of enterprise AI pilots deliver zero measurable P&L impact — a figure that has become one of the most-cited statistics in the AI adoption discourse. The statistic requires a caveat: the study uses a binary threshold (zero measurable P&L impact), and reports about the study cite conflicting sample sizes — some reference 52 structured interviews, others 150 interviews and 350 survey responses. [Estimated] The threshold is severe: a pilot that improves employee satisfaction or reduces turnaround time but has not yet generated attributable revenue or cost savings registers as a failure. The complementary IDC finding — that for every 33 proofs of concept launched, only 4 reach production — measures a different thing (per-POC success rate within individual organizations) than S&P Global's finding that the average organization scraps 46% of AI proofs of concept before production (a portfolio-level metric across surveyed firms). [Measured] Both point toward the same phenomenon but from different angles, and combining them without noting the methodological difference creates false precision.

The analytical contribution is this: the 78% adoption headline — the number that reassures markets, informs policy, and anchors the "we have time" narrative — is the organizational equivalent of 85% GPU utilization. It measures activity, not value. The Ratchet tightens on metrics, not on value. The dissipation gap is the Workslop Ceiling operating at the macroeconomic measurement level. And the reassurance narrative — "adoption is slow, therefore disruption is distant" — is built on a measurement instrument that cannot distinguish between organizations that have transformed their operations and organizations that have purchased a subscription.

Part III: The Budget Channel

The dissipation gap does not slow displacement. It redirects the displacement channel from visible task substitution to invisible budget reallocation.

The mechanism is straightforward. Organizations are increasing AI spending: budgets are growing from approximately 3% to 5% of annual expenditures, and the share of organizations spending half or more of their total IT budget on AI is expected to rise more than sixfold, from 3% to 19%. [Measured] But 80% or more of these organizations report zero measurable impact on productivity or employment. The money has to come from somewhere. In organizational budgets, "somewhere" is the labor line.

Oxford Economics identified this precisely: layoffs are occurring "to finance experiments in AI" rather than because "AI is replacing workers." [Estimated] The distinction is critical. Workers are not displaced because a machine did their job. They are displaced because the budget that funded their position was reallocated to fund a technology deployment that, in four out of five cases, has not yet produced measurable value. The dissipation gap ensures that the displacement occurs through an invisible channel — not task substitution, which would be attributionally clear, but budget reallocation, which passes through enough organizational intermediaries that the causal chain dissolves.

The corporate evidence is now substantial enough to trace the budget channel in named firms.

Klarna provides the most extensively documented case. CEO Sebastian Siemiatkowski publicly and repeatedly linked headcount reduction to AI deployment. The company went from approximately 7,000 employees in 2022 to roughly 3,000 by 2025. An AI assistant deployed through an OpenAI partnership handled 2.3 million conversations in its first month, performing work equivalent to 700 full-time customer service agents. [Measured] The company saved approximately $10 million annually on marketing alone using AI. But in May 2025, Klarna began re-hiring human customer service representatives after acknowledging the AI pivot led to declining service quality — what the AI researcher Gary Marcus dubbed "the Klarna Effect." [Measured] The budget channel operated: headcount was cut, AI spending absorbed the freed resources, and when the deployment underperformed, the headcount was already gone.

Salesforce demonstrates the budget-rebalancing mechanism explicitly. CEO Marc Benioff stated in August 2025: "I was able to rebalance my head count on my support. I've reduced it from 9,000 heads to about 5,000 because I need less heads." [Measured] The company later clarified this was "rebalancing/redeployment" — approximately 4,000 experienced staff reassigned from support into sales roles. The budget channel is visible: AI agent deployment reduces support headcount need, the freed budget is reallocated, the displaced workers are absorbed internally. In firms with less capacity to redeploy, the rebalancing produces layoffs rather than reassignment.

IBM offers the large-enterprise case. CEO Arvind Krishna told the Wall Street Journal that IBM had replaced hundreds of HR employees with its internal AskHR chatbot and told Bloomberg the figure would approach 7,800 back-office jobs over time. Krishna described the layoffs as "a direct outcome of automation." [Measured] IBM simultaneously increased investment in AI and quantum computing while cutting back-office headcount — the budget channel in its most transparent form.

Oracle represents the prospective version. TD Cowen reported in January 2026 that Oracle was considering cutting 20,000–30,000 jobs specifically to "free up $8 billion to $10 billion in cash flow" to fund AI data-center expansion. [Estimated] The budget channel is stated explicitly: headcount reduction as a financing mechanism for AI infrastructure, not as a consequence of AI task substitution. Additional cases include Workday (1,750 jobs to "reallocate resources toward AI investments"), Dropbox (528 employees to refocus around AI tools), and Fiverr (30% workforce reduction repositioning as "AI-first"). [Measured]

A caveat matters here: Deutsche Bank analysts coined the term "AI redundancy washing" in January 2026, warning that companies may attribute cuts to AI that are actually driven by pandemic overhiring corrections, competitive pressure, or conventional restructuring. [Estimated] The Challenger, Gray & Christmas full-year 2025 data reported 54,836 AI-cited job cuts — but this represented less than 5% of total layoffs (1,206,374), with DOGE-related cuts (293,753) and market/economic conditions (253,206) dominating. [Measured] The essay's argument is not that all budget-channel displacement is AI-driven. It is that the budget channel — whatever mix of motivations drives it — produces displacement that is attributionally opaque. Whether a job was cut "because of AI" or "because the department was restructured" is, from the displaced worker's perspective, a distinction without a practical difference. And from the political system's perspective, it is a distinction that prevents the formation of a legible constituency.
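The proportions behind the "less than 5%" figure can be checked directly from the counts quoted above. The sketch below uses only those numbers. The variable and function names are illustrative.

```python
# Full-year 2025 figures as quoted in the text (Challenger, Gray & Christmas).
TOTAL_LAYOFFS = 1_206_374
CITED_REASONS = {
    "AI": 54_836,
    "DOGE": 293_753,
    "Market/economic conditions": 253_206,
}


def share_of_total(count, total=TOTAL_LAYOFFS):
    """Fraction of all announced cuts attributed to one stated reason."""
    return count / total


shares = {reason: share_of_total(n) for reason, n in CITED_REASONS.items()}

for reason, share in shares.items():
    print(f"{reason:<28} {share:6.1%}")
```

These are shares by stated reason only. As the paragraph above argues, the stated reason is exactly the field that budget-channel displacement leaves ambiguous.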

The tax code amplifies the budget channel asymmetry. Under the One Big Beautiful Bill Act, signed July 4, 2025, organizations can expense a $1 million AI server investment in the year purchased through 100% bonus depreciation, yielding an immediate $210,000 tax benefit at the 21% corporate rate. If the investment additionally qualifies for the Section 41 R&D tax credit, the effective after-tax cost drops further — potentially to approximately $590,000. [Measured] For a $1 million worker retraining program, the employer can deduct the full amount as an ordinary business expense under Section 162, yielding the same $210,000 headline benefit. But six distinct IRC restrictions create friction that the hardware purchase does not face: the Section 127 annual cap of $5,250 per employee (set in the 1980s, not inflation-adjusted until OBBBA provides adjustments starting for tax years after 2026), nondiscrimination requirements preventing targeting of training to highest-value employees, working condition fringe limitations restricting tax-free treatment to skills maintaining the current position rather than retraining for new roles, no equivalent to bonus depreciation for human capital, double-dipping prohibitions, and expense exclusions for meals, lodging, and transportation associated with training. [Measured]
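The headline arithmetic in this comparison is simple enough to lay out explicitly. The sketch below reproduces only the figures quoted above. The credit amount it derives is just the difference implied by the cited $590,000 figure, not an actual Section 41 computation, and all names are illustrative.

```python
CORPORATE_RATE_PERCENT = 21   # corporate rate quoted in the text
SPEND = 1_000_000             # the text's $1 million example, in dollars


def first_year_tax_benefit(amount, rate_percent=CORPORATE_RATE_PERCENT):
    """Tax saved when the full amount is deducted in the year it is spent."""
    return amount * rate_percent // 100


# Both the server purchase (100% bonus depreciation) and the retraining
# program (Section 162 deduction) produce the same headline number.
benefit = first_year_tax_benefit(SPEND)
after_tax_cost = SPEND - benefit

# The text cites an after-tax cost of roughly $590,000 once the R&D credit
# is stacked on the hardware purchase. The value below is only what that
# figure implies; it is NOT a Section 41 credit calculation.
CITED_COST_WITH_CREDIT = 590_000
implied_credit = after_tax_cost - CITED_COST_WITH_CREDIT

print(f"Headline tax benefit:          ${benefit:,}")
print(f"After-tax cost, deduction only: ${after_tax_cost:,}")
print(f"Implied additional credit:      ${implied_credit:,}")
```

The point of the comparison is that the first two lines are identical for hardware and retraining. Only the hardware path has access to the third.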

The critical asymmetry is not in the headline deduction — both technically yield $210,000 — but in timing acceleration, credit stacking, and administrative friction. Acemoglu, Manera, and Restrepo found the effective tax rate on capital invested in equipment and software has declined to approximately 5%, while effective labor taxes stand above 28.5%. [Measured] Elliott Davis tax advisory stated explicitly: "The OBBBA codifies a new economic reality: U.S. tax policy now actively subsidizes the move from human labor to AI." [Measured]

The budget channel converts slow adoption into invisible displacement. Jones sees slow adoption and concludes workers are safe. The Theory of Recursive Displacement sees slow adoption and identifies the channel through which workers are displaced without anyone, including the displaced workers themselves, being able to point to AI as the cause.

Part IV: The Invisibility Gradient

The budget channel displaces workers. The Dissipation Veil prevents the political system from seeing the displacement. The mechanism connecting these is what might be called an invisibility gradient: the gap between the speed at which acute crises trigger political response and the speed at which structural shifts fail to.

The data on political response speed to acute crises is unambiguous. After Lehman Brothers filed for bankruptcy on September 15, 2008, the Emergency Economic Stabilization Act (TARP) was signed on October 3 — 18 days, and that included an initial House rejection. [Measured] After the WHO declared a pandemic on March 11, 2020, the CARES Act was signed on March 27 — 16 days. [Measured] Acute crises produce visible suffering, clear causation, media attention, and political pressure. The political system responds with extraordinary speed when these four preconditions are met simultaneously.

The data on political response to structural labor shifts tells the opposite story. The productivity-wage gap has persisted since 1979 — 47 years without comprehensive legislative response. [Measured] The gig economy has existed since Uber's founding in 2009 — approximately 17 years without a federal worker classification framework. [Measured] The Biden DOL's 2024 rule tightening independent contractor definitions under the FLSA was an administrative interpretation, not comprehensive legislation. Structural shifts do not produce the four preconditions for acute response. The suffering is real but distributed. The causation is complex and contestable. The media attention is intermittent. The political pressure is diffuse.
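The contrast between the two response speeds can be verified from the dates given above. The following sketch computes the acute lags in days and the structural lags in years. It assumes 2026 as the reference year, matching the essay's vantage point, and the dictionary names are illustrative.

```python
from datetime import date

# Acute crises: (triggering event, legislative response), dates as in the text.
ACUTE = {
    "Lehman filing -> TARP signed": (date(2008, 9, 15), date(2008, 10, 3)),
    "WHO pandemic declaration -> CARES Act signed": (date(2020, 3, 11), date(2020, 3, 27)),
}

# Structural shifts: onset year, with no comprehensive federal response to date.
STRUCTURAL_ONSET_YEAR = {
    "Productivity-wage gap": 1979,
    "Gig economy (Uber founded)": 2009,
}

AS_OF_YEAR = 2026  # assumption: the essay's reference year

acute_days = {label: (end - start).days for label, (start, end) in ACUTE.items()}
structural_years = {
    label: AS_OF_YEAR - onset for label, onset in STRUCTURAL_ONSET_YEAR.items()
}

for label, days in acute_days.items():
    print(f"{label}: {days} days")
for label, years in structural_years.items():
    print(f"{label}: {years} years and counting")
```

The acute lags are measured in days and the structural ones in decades, which is the gradient this section describes.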

The dissipation gap ensures AI displacement presents as structural, not acute. No single event triggers the acute-response mechanism. The displacement is distributed across thousands of firms making independent budget decisions, none of which individually constitutes a political crisis. The Carnegie Endowment confirmed this framing in February 2026: "AI disruption is unlikely to manifest as sudden mass redundancy. It is more likely to take the form of incremental task substitution and workflow automation that progressively reduce the scope of existing roles. Jobs would be hollowed out before being eliminated, creating prolonged insecurity rather than immediate unemployment." [Estimated] (This is a commentary by Amanda Coakley, a Europe's Futures Fellow at the Institute for Human Sciences in Vienna, published on Carnegie Europe's blog — a well-informed analytical perspective, not an institutional research finding.)

The political infrastructure to detect and respond to structural AI displacement does not exist. As of February 2026, no G7 country has established an AI-specific labor displacement tracking mechanism. [Measured] The U.S. Bureau of Labor Statistics does not track AI-specific displacement. The Biden-era Executive Order on AI (October 30, 2023) directed reporting on workforce impacts, but the Trump administration revoked it. The UK AI Safety Institute focuses on AI safety, not labor. Germany's Institute for Employment Research has published displacement projections but has no ongoing monitoring system. France, Japan, Canada, and Italy have no identified mechanisms. The EU AI Act regulates deployment but does not track displacement. The 2025 G7 Leaders' Statement on AI for Prosperity mentioned "preparing workers for AI-driven transitions" but established no monitoring infrastructure. [Measured]

The Warner-Hawley bill — the AI-Related Job Impacts Clarity Act (S. 3108, 119th Congress), introduced November 5, 2025 — would require quarterly disclosures to the Secretary of Labor from publicly traded companies, federal agencies, and certain private companies, covering employees laid off due to AI replacement, new AI-related hires, positions left unfilled due to AI automation, and individuals being retrained. [Measured] The DOL would publish quarterly summaries and biannual net-impact analyses. It has been read twice and referred to the HELP Committee. No committee vote has occurred. [Measured]

The analytical point is not simply that political response is slow. It is that the dissipation gap prevents the preconditions for political response from forming. Acute crises produce visible suffering, clear causation, media attention, and political pressure. The Dissipation Veil prevents all four. Workers displaced through budget reallocation do not appear in AI-specific layoff statistics — because no AI-specific layoff statistics exist. The causal chain from AI spending to headcount reduction passes through enough organizational intermediaries that it dissolves before reaching attribution. The suffering is real but individually explicable: "my company restructured," not "AI took my job." Without the Warner-Hawley tracking mechanism or its equivalent, the phenomenon cannot be measured. Without measurement, it cannot become politically salient. Without salience, it cannot trigger response.

The China Shock provides the historical precedent. Initial displacement began with China's WTO accession in 2001. The first rigorous academic documentation — Autor, Dorn, and Hanson's "The China Syndrome" — appeared in the American Economic Review in 2013, a 12-year lag from displacement onset to systematic research. [Measured] The political mobilization triggered by accumulated economic distress arrived during the 2016 presidential campaign, with tariffs imposed starting in 2018 — a 17-year lag from displacement onset to major policy action. [Measured] Autor and colleagues concluded that existing U.S. policies "failed to adequately insulate workers." The Trade Adjustment Assistance program, the primary federal response, was chronically underfunded; the de facto buffers were general unemployment insurance and Social Security disability claims, which surged as a coping mechanism in affected communities.

The AI displacement timeline is structurally similar — early-stage, diffuse, attributionally ambiguous — but the dissipation gap adds a layer the China Shock lacked. Chinese import competition was at least measurable: trade data, factory closures, and regional employment statistics created an evidentiary base that accumulated over time. AI budget-channel displacement is measured by instruments that cannot distinguish it from conventional restructuring. The evidence may never accumulate in a form the political system can process.

Part V: The Two Clocks

The most dangerous implication of the Dissipation Veil: the gap creates the perception that there is time while the irreversible mechanisms continue operating beneath the surface.

Jones's advice (exploit the gap, build AI fluency) treats the gap as a stable window. But the mechanisms documented across this framework do not pause because adoption is slow. They operate on a different clock.

The visible clock, the one that governs task substitution, revenue disruption, and financial contagion, runs at the dissipation rate. This is the clock Jones tracks. It is genuinely slow. Some 78% of organizations "use AI" while more than 80% report no impact. By MIT NANDA's count, 95% of pilots deliver no measurable P&L impact, and IDC finds that only 4 of every 33 proofs of concept reach production. The visible economic transformation is glacial by the standards of the technology's underlying capability.

The invisible clock — the one that governs competence pipeline degradation, wage signal collapse, and expertise atrophy — runs at the capability rate. The Competence Insolvency does not wait for organizations to successfully deploy AI. It operates the moment prospective workers observe a flattened earnings curve and redirect their human capital investment.

The enrollment data confirms the invisible clock is running. The CRA CERP Pulse Survey (October 2025, 134 responses from 130 institutions) found 62% of academic units reported declining enrollment for the 2025–26 academic year, with the average decline at 11–15% and 31% of declining units reporting drops greater than 20%. [Measured] The National Student Clearinghouse independently confirmed that CS enrollment declined across all award and institution types in Fall 2025: -14.0% at the graduate level, -3.6% at the undergraduate level at primarily baccalaureate institutions. [Measured] This occurred against a backdrop of overall postsecondary enrollment growth (total up 1.0%). The prior Taulbee Survey still showed 9.9% growth for the 2023–24 academic year, meaning the reversal is sharp and recent. [Measured] The UC system reported CS enrollment at 12,652 undergraduates in 2025–26, down 6% from 2024 and 9% over two years — the first sustained decline since the dot-com bust. [Measured]

The pipeline exclusion documented in Structural Exclusion operates independently of the adoption rate. Stanford's Digital Economy Lab ("Canaries in the Coal Mine," August 2025; Brynjolfsson, Chandar, Chen; ADP payroll data covering millions of workers) found software developer employment for ages 22–25 declined nearly 20% from the late 2022 peak to July 2025, while employment for workers aged 30 and above grew 6–13% in the same period. [Measured] A Harvard/Revelio study (August 2025; 62 million workers, 285,000 firms) found junior employment in AI-adopting firms fell 7.7% relative to non-adopters within six quarters, driven by slower hiring rather than layoffs, concentrated among graduates from Tier 2 and Tier 3 schools. [Measured] Indeed Hiring Lab data confirms: software engineer postings down 49% from pre-pandemic levels, junior-level titles down 34%, and the share of tech postings requiring 5 or more years of experience rising from 37% to 42%. [Measured]

These dynamics do not require organizations to have integrated AI productively. They require only that organizations are spending on AI (budget reallocation), that AI capability is visible in the market (wage signal effects), and that prospective workers observe flattened career curves (enrollment response). All three conditions are met while 80% of firms report zero impact.

The emerging evidence on skill acquisition in AI-mediated environments sharpens the concern. Anthropic's own randomized controlled trial (January 2026; 52 mostly junior software engineers learning a new Python library) found that AI-assisted learners scored 17% lower on comprehension assessments — equivalent to nearly two letter grades — with the largest gaps on debugging questions. [Measured] The researchers identified a distinction between harmful strategies ("AI delegation," where learners outsource thinking to the AI) and beneficial ones ("conceptual inquiry," where learners use the AI to deepen understanding). The METR RCT (July 2025; 16 experienced developers, 246 real issues) found that experienced developers took 19% longer with AI tools despite expecting a 24% speedup — suggesting that the integration overhead can exceed the productivity gain even for experts. [Measured] Dakhel et al. (Journal of Systems and Software, 2023) concluded that "Copilot can become an asset for experts, but a liability for novice developers." [Measured]

The two-clock problem is the structural core of the Dissipation Veil. The presenter sees the slow clock and concludes there is time. The Theory of Recursive Displacement tracks the fast clock and sees the window closing. Both clocks are real. The slow one is visible. The fast one is not. And the gap between them is not a buffer — it is the veil that prevents the fast clock from triggering the response it requires.

Part VI: The Adversarial Equilibrium and the Services Deflation Thesis

The services deflation thesis — which represents the most analytically serious optimistic case against the displacement framework — holds that AI will make services cheaper, returning purchasing power to consumers and offsetting displacement effects. The strongest version of this argument combines the BIS finding that goods and services deflation has shown only weak association with output decline across 140 years of data (Borio, Erdem, Filardo, and Hofmann, BIS Quarterly Review, March 2015; dataset spanning 1870–2013 across 38 economies) [Measured], Erik Brynjolfsson's observation of a 2.7% U.S. productivity jump in 2025, and the Baumol cost-disease reversal argument: AI may finally break the structural resistance of services to productivity improvement. The BIS data is legitimate and the mechanism is theoretically sound. This essay does not dismiss it.

But the Adversarial Equilibrium Trap identifies a category of economic activity where the thesis structurally fails. In adversarial contexts — litigation, cybersecurity, regulatory compliance, competitive intelligence, talent acquisition — each party's incentive is not to minimize cost but to maximize relative advantage over the opposing party. When both sides adopt AI, costs do not fall to a new, lower equilibrium. They escalate to a new, higher plateau, as efficiency gains are consumed by competitive escalation rather than passed through to consumers.

Legal services provide the cleanest empirical demonstration. The ACC-Everlaw survey (657 in-house legal professionals across 30 countries, fielded June–July 2025) found that 59% of respondents reported "no noticeable savings yet" from outside law firms' use of AI. [Measured] Harvard's Center on the Legal Profession found that no AmLaw 100 firm anticipates reducing attorney headcount due to AI — even as individual task productivity gains exceed 100x on specific workflows. [Qualitative Interview Study, n=10] The e-discovery precedent is definitive: digitization was supposed to make document review cheaper, and instead it expanded discoverable material so dramatically that parties exploited it to impose greater burdens on opponents. RAND documented median per-case ESI production costs of $1.8 million, with three-quarters of respondents confirming that discovery costs had increased disproportionately since digitization. [Measured] AI is following the same pattern. Law firm technology spending is growing at nearly 10% annually while billing rates accelerate — the efficiency gains are additive to costs, not substitutive. [Measured]

The game-theoretic structure is a prisoner's dilemma that nests at three scales simultaneously: at the case level (each litigant must deploy AI or face asymmetric disadvantage), at the firm level (each law firm must adopt or lose competitive position), and at the infrastructure level (the hyperscalers supplying the tools must keep investing or lose market share). This nesting is structurally identical to the Ratchet — individually rational decisions producing collectively suboptimal outcomes — but applied across millions of adversarial proceedings per year rather than a handful of hyperscaler capex cycles.
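The case-level version of this dilemma can be sketched as a two-player game. This is a minimal illustration, not a model drawn from the cited studies: the payoff numbers are hypothetical ordinal values chosen only to reproduce the structure described above, and the names `PAYOFF`, `best_response`, and `nash_equilibria` are introduced here for illustration.

```python
from itertools import product

# Hypothetical ordinal payoffs (higher is better). These are NOT empirical
# estimates; they only encode the ordering the essay describes.
# Key: (my_move, opponent_move) -> my payoff. The game is symmetric.
PAYOFF = {
    ("abstain", "abstain"): 3,  # neither side spends on AI; parity holds
    ("adopt", "abstain"): 4,    # I gain a relative advantage
    ("abstain", "adopt"): 0,    # I face asymmetric disadvantage
    ("adopt", "adopt"): 1,      # parity again, but both carry higher costs
}
MOVES = ("abstain", "adopt")


def best_response(opponent_move):
    """Return the move that maximizes my payoff against a fixed opponent move."""
    return max(MOVES, key=lambda move: PAYOFF[(move, opponent_move)])


def nash_equilibria():
    """Return move pairs where each side is already playing a best response."""
    return [
        (a, b)
        for a, b in product(MOVES, repeat=2)
        if best_response(b) == a and best_response(a) == b
    ]


if __name__ == "__main__":
    for opponent_move in MOVES:
        print(f"opponent plays {opponent_move}: best response is {best_response(opponent_move)}")
    print("equilibria:", nash_equilibria())
```

Under these assumed payoffs, adopting is the best response to either opponent move, so mutual adoption is the only equilibrium even though both parties would prefer mutual abstention. Any payoff table with the same ordering yields the same result, which is why the structure can repeat at the firm and infrastructure levels.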

The dynamic generalizes beyond legal services. In high-frequency trading, Budish, Cramton, and Shim (Quarterly Journal of Economics, 2015) found that speed-based competition has not reduced the size or frequency of arbitrage opportunities; it has only raised the bar for capture speed. [Measured] Profitability remained constant while the duration of those opportunities fell from 97 milliseconds to 7 milliseconds. In cybersecurity, CrowdStrike's 2026 Global Threat Report found AI-enabled adversary operations increased 89% year-over-year, forcing proportional defensive investment. [Measured] In each domain, the adversarial structure consumes the efficiency gains that would otherwise reach consumers as lower prices.

The implication for the Dissipation Veil is twofold. First, the services deflation thesis — the strongest counter-argument to the Aggregate Demand Crisis — fails in every market with adversarial structure. The demand crisis does not require that AI fails to produce efficiency gains. It requires only that those gains are captured by competitive escalation rather than passed through to consumer prices. Second, the dissipation gap masks this distinction. Aggregate adoption surveys do not differentiate between domains where AI reduces costs (market-expanding sectors) and domains where AI escalates them (zero-sum sectors). The headline adoption number treats both as the same phenomenon — another layer of measurement contamination operating behind the Veil.

Part VII: What Would Prove This Wrong

The Dissipation Veil thesis specifies what would falsify it.

Falsification Condition 1: Budget-channel displacement proves attributionally transparent. If displaced workers accurately identify AI investment as the cause of their job loss in survey data, and media coverage consistently links budget reallocation to AI spending, then the Veil is not operating. This would be measurable via the BLS Displaced Workers Survey (biennial CPS supplement, though it currently lacks an AI-specific reason code), Challenger monthly AI-cited layoff reports, and systematic content analysis of earnings call transcripts. The Warner-Hawley bill, if passed, would create the first dedicated instrument. Leading indicator: Challenger monthly AI-cited layoff trends combined with JOLTS separation rates by sector. No dedicated study exists that asks displaced workers to choose between "AI/automation," "restructuring," "budget cuts," and "performance" as the cause and cross-references the employer's stated reason — this gap is itself evidence that the Veil's operation cannot currently be observed at the individual level.

Falsification Condition 2: Political response activates on structural presentation. If AI-specific labor displacement legislation passes in any G7 country within 18 months (by approximately September 2027) despite the structural — not acute — presentation of displacement, the political system is more responsive to diffuse signals than the thesis predicts. Directly observable through standard legislative monitoring. No proxy needed.

Falsification Condition 3: The adoption-productivity gap closes rapidly. If the share of organizations reporting measurable productivity impact from AI rises above 30% — from the current approximately 20% as measured by Deloitte, McKinsey's "high performer" cohorts, and PwC's Global CEO Survey — within 12 months, the dissipation gap is closing and the Workslop Ceiling is breaking. This would indicate the gap was a temporary pre-acceleration phase consistent with the Solow Paradox pattern, not a structural obscuring mechanism. Trackable through annual McKinsey, Deloitte, and BCG surveys, with a 6–12 month data lag.

Falsification Condition 4: The competence pipeline stabilizes despite the gap. If entry-level hiring in AI-exposed fields recovers to within 10% of 2023 levels, and CS/engineering enrollment growth turns positive, the invisible damage clock is not running independently of the visible adoption clock. Entry-level hiring is trackable through Indeed Hiring Lab weekly postings data in near-real-time. CS enrollment is trackable through the CRA Taulbee Survey (annual, published with approximately 12-month lag) and CERP Pulse Surveys. Leading indicator: Indeed weekly tech posting data for entry-level titles, which currently shows junior-level software engineer postings down 34% from pre-pandemic levels. [Measured]
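The numeric thresholds in Conditions 2 through 4 can be written down as explicit checks. The sketch below is one possible encoding, not an instrument the essay specifies: the `Indicators` fields and function names are hypothetical, "within 10% of 2023 levels" is interpreted as an index of at least 0.90 with 2023 set to 1.0, and the caller is assumed to supply observations that fall inside each condition's time window. Condition 1 is omitted because, as noted above, no dedicated instrument for it exists yet.

```python
from dataclasses import dataclass


@dataclass
class Indicators:
    """Hypothetical container for the tracked signals (names are illustrative)."""
    g7_displacement_law_passed: bool   # Condition 2: any G7 country, inside the window
    productivity_impact_share: float   # Condition 3: fraction of orgs reporting measurable impact
    entry_level_hiring_index: float    # Condition 4: entry-level hiring, 2023 level = 1.0
    cs_enrollment_growth: float        # Condition 4: year-over-year enrollment growth


def condition_2_met(ind: Indicators) -> bool:
    # Political response activated despite structural presentation.
    return ind.g7_displacement_law_passed


def condition_3_met(ind: Indicators) -> bool:
    # Share of organizations reporting measurable impact rises above 30%.
    return ind.productivity_impact_share > 0.30


def condition_4_met(ind: Indicators) -> bool:
    # Assumed reading: hiring back to at least 90% of the 2023 level
    # AND enrollment growth turning positive.
    return ind.entry_level_hiring_index >= 0.90 and ind.cs_enrollment_growth > 0


def evaluate(ind: Indicators) -> dict:
    """Return which falsification conditions the supplied observations satisfy."""
    return {
        "condition_2": condition_2_met(ind),
        "condition_3": condition_3_met(ind),
        "condition_4": condition_4_met(ind),
    }
```

Calling `evaluate` on each new data release would give a running record of which conditions, if any, have been met. The thresholds come from the text; how each input is measured is left to the underlying surveys.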

What the Dissipation Veil Does Not Claim

Intellectual honesty requires specifying the boundaries.

The essay does not dismiss the services deflation thesis entirely. The BIS 140-year study finding weak association between goods and services deflation and output decline is legitimate empirical evidence. The question the Aggregate Demand Crisis essay poses is not whether deflation is harmful — it is who captures the surplus. If AI-driven services deflation returns $8,000–$12,000 per household as Bloch projects, the demand crisis may not materialize. The redistribution channel — not the deflation itself — is the key variable.

The essay does not dismiss the business formation data as noise. Census Bureau Business Formation Statistics for January 2026 show 532,319 business applications and 29,863 projected employer formations per month. [Measured] The 5.6% conversion rate is a steep funnel, but 29,863 projected new employer businesses per month is not zero. The Theory has documented why this is insufficient at scale — the gig economy income discount, the AI-native company's extreme revenue-per-employee advantage — but it should not pretend the signal does not exist.

The essay does not claim political response is impossible. The SAG-AFTRA strike of 2023 successfully extracted AI-specific concessions from major studios. The EU AI Act exists. The counter-model in the Theory of Recursive Displacement assigns 20–35% probability to Institutional Redirect — and in some domains, this range may be conservative. Claudia Sahm's observation is precisely right: the hard problem is not whether political response is possible but whether it activates on structural presentation. The Dissipation Veil thesis predicts a structural bias toward delay, not impossibility.

The Fallacy of Composition in the Information Environment

The Citrini event revealed something that the adoption data, by itself, cannot: the market is capable of responding to AI displacement as an acute signal. A fictional recession contributed to a real sell-off, estimated at $300 billion, in a single day. The information (the mechanisms, the data, the feedback loops) already existed, and when it was packaged as narrative rather than as statistics, it penetrated the political-financial system in hours.

The reassurance narrative that followed is the Dissipation Veil reasserting itself. The gap is real. Adoption is slow. There is time. Individually, each person who reads the adoption data and concludes the disruption is distant is making a reasonable inference from the available evidence. Collectively, this reasonable inference prevents the political system from seeing the structural damage — the pipeline degradation, the wage signal collapse, the competence atrophy — that is accumulating on a clock the adoption data does not measure.

This is the fallacy of composition applied to the information environment. Each firm that adopts AI unproductively contributes to the dissipation gap. Each analyst who cites the gap as evidence of safety reinforces the Veil. Each policymaker who looks at the adoption data and concludes there is no crisis defers the intervention that might address the damage before it becomes irreversible. No individual actor is wrong. The collective outcome is that the window for intervention closes while everyone watches a clock that does not track the mechanisms that matter.

The Veil is either operating or it is not. The falsification conditions specify how to tell. Track them.
